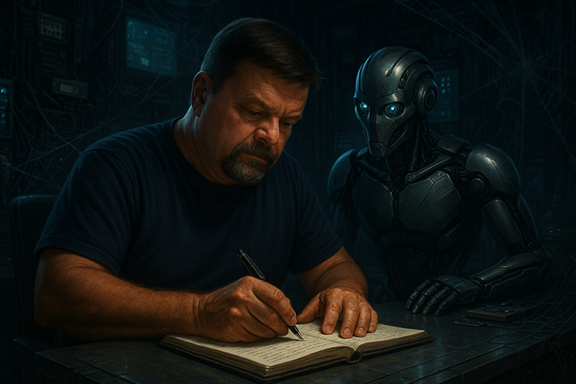

I am picking up the thread from my previous LinkedIn post so that we can peel back a few more layers of the AI onion.

I have been reflecting on the advent of Artificial Intelligence, which, contrary to the prevailing paranoia, is neither a looming threat nor an unstoppable force eroding human purpose. AI is simply the next chapter in the long story of engineering evolution. I embraced Integrated Development Environments (IDEs) when they reshaped how we built software, and I embrace AI with the same mindset: optimistic, pragmatic, and grounded in engineering discipline.

Back then, automation and code generation felt revolutionary. Today, we see the same wave, only amplified by Large Language Models (LLMs) capable of generating vast volumes of code at an unprecedented pace. And yet, the conversation across the industry remains dominated by speed, productivity, and the chase for the latest and greatest model architecture. Speed is intoxicating, but speed without engineering discipline is reckless.

The uncomfortable truth is that AI is enabling us to produce more code than ever before: more complexity, generated more quickly and evolving continuously. This is not inherently bad, but it is dangerous when paired with weak guardrails, inconsistent engineering practices, and a lack of validation. Our work in the AI Center of Enablement has reinforced this repeatedly. Guardrails, governance, and responsible design matter more than ever.

So the question we should be asking is not, “How fast can AI help us deliver?” It should be, “How do we ensure the solutions AI creates are safe, robust, and trustworthy?”

We Must Refocus on SOLID Engineering

Whether a solution is generated by a human or an AI agent, SOLID engineering principles must anchor everything. Abstractions, boundaries, testability, observability, and maintainability do not vanish simply because a model produced the code. They become more important.

The velocity of AI also means technical debt can accumulate far faster than before. If we ignore this, we will recreate the same problems of the past at machine speed, which is my recurring nightmare.

AI Will Build Our Solutions Faster

We must accept and embrace that AI will generate code faster than any human team. That is not the threat; that is the opportunity. But this requires a mindset shift:

We are no longer only coding engineers. We are becoming prompt, instruction, and validation engineers.

Our success will depend less on how efficiently we type and more on how precisely we describe intent. This aligns with our organisational direction: driving automation, elevating engineering practices, and shifting QA towards deep automation and rapid validation.

Quality Prompts Over Code

Prompt engineering is not cheating or cutting corners. It is engineering!

Clear intent, structured constraints, context awareness, and explicit boundaries are becoming critical skill sets. Just as poor functional requirements produce poor systems, poor prompts produce poor outputs. AI does not eliminate the need for clarity; it demands more of it.

We Must Validate What AI Does, Rapidly and Relentlessly

We must not slow AI down, but we must keep it honest.

The validation layers we build must be:

- Automated

- Independent from the AI generating the code

- Fast enough to match AI’s pace

- Opinionated and governed by our guardrails

This includes static analysis, semantic checks, security scans, behavioural tests, and architecture guardrail enforcement. Our internal initiatives, especially the focus on automated quality and engineering guardrails, echo this direction strongly. The future QA role is not the “manual tester of AI output”. It is the designer of automated safety nets that keep both human‑authored and AI‑authored code aligned to expectations.
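The following minimal Python sketch makes the idea of an automated validation layer, independent of the generator, more concrete. It is illustrative only. The forbidden calls and imports are hypothetical stand-ins for an organisation's real guardrail policy. The names `validate`, `static_checks`, and `behavioural_checks` are also hypothetical. The gate never consults the model that produced the code. It parses the candidate source, applies security and architecture rules to the syntax tree, and only then runs behavioural test cases.

```python
import ast
from dataclasses import dataclass, field

# Hypothetical guardrail policy: stand-ins for an organisation's real rules.
FORBIDDEN_CALLS = {"eval", "exec", "compile", "__import__"}
FORBIDDEN_IMPORTS = {"os", "subprocess", "socket"}


@dataclass
class Report:
    """Outcome of validating one piece of generated code."""
    passed: bool = True
    findings: list = field(default_factory=list)

    def fail(self, message: str) -> None:
        self.passed = False
        self.findings.append(message)


def static_checks(tree: ast.AST, report: Report) -> None:
    """Security and architecture guardrails applied to the syntax tree.

    Only catches direct calls by name (e.g. eval(...)); a real scanner
    would also handle aliases, attribute access and dynamic imports.
    """
    for node in ast.walk(tree):
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in FORBIDDEN_CALLS:
                report.fail(f"line {node.lineno}: forbidden call {node.func.id}()")
        elif isinstance(node, ast.Import):
            for alias in node.names:
                if alias.name.split(".")[0] in FORBIDDEN_IMPORTS:
                    report.fail(f"line {node.lineno}: forbidden import {alias.name}")
        elif isinstance(node, ast.ImportFrom) and node.module:
            if node.module.split(".")[0] in FORBIDDEN_IMPORTS:
                report.fail(f"line {node.lineno}: forbidden import {node.module}")


def behavioural_checks(source: str, func_name: str, cases: list, report: Report) -> None:
    """Run the generated function against expected (args, result) pairs."""
    namespace: dict = {}
    try:
        exec(source, namespace)  # NOT a sandbox; real pipelines isolate this step
    except Exception as err:
        report.fail(f"module failed to load: {type(err).__name__}")
        return
    func = namespace.get(func_name)
    if not callable(func):
        report.fail(f"missing function {func_name}")
        return
    for args, expected in cases:
        try:
            actual = func(*args)
        except Exception as err:
            report.fail(f"{func_name}{args} raised {type(err).__name__}")
            continue
        if actual != expected:
            report.fail(f"{func_name}{args} returned {actual!r}, expected {expected!r}")


def validate(source: str, func_name: str, cases: list) -> Report:
    """Independent gate: never consults the model that wrote `source`."""
    report = Report()
    try:
        tree = ast.parse(source)
    except SyntaxError as err:
        report.fail(f"syntax error: {err.msg} (line {err.lineno})")
        return report
    static_checks(tree, report)
    if report.passed:  # never execute code that failed a guardrail
        behavioural_checks(source, func_name, cases, report)
    return report


if __name__ == "__main__":
    generated = "def add(a, b):\n    return a + b\n"
    risky = "import subprocess\ndef add(a, b):\n    return eval(f'{a}+{b}')\n"
    for label, src in (("generated", generated), ("risky", risky)):
        result = validate(src, "add", [((1, 2), 3), ((-1, 1), 0)])
        print(label, result.passed, result.findings)
```

In a real pipeline, each stage would more likely be a dedicated tool, such as a linter, a security scanner, or a test runner in an isolated environment, rather than hand-rolled checks. Note also that `exec` here provides no isolation. The design point the sketch illustrates is ordering: cheap, opinionated checks run first and block anything unsafe before any generated code is executed.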

We Must Work With AI, Not Against It

AI should not be a bolt‑on tool.

It should be integrated into:

- Code generation

- Documentation

- Test creation

- Architecture validation

- Guardrail enforcement

- Incident analysis

- Technical debt detection

- Resilience checks

- Compliance reviews

We already see this shaping our engineering strategy, where AI is a core lever for improving stability, availability, and quality.

AI amplifies everything, including our weak points. If our processes are broken, AI accelerates the damage. If our engineering is strong, AI becomes a force multiplier.

We Need to Learn to Trust AI and Earn That Trust Through Discipline

Trust is not blind. Trust is engineered.

We need repeatable mechanisms that:

- Prove AI outputs are correct

- Demonstrate predictable outcomes

- Enforce organisational guardrails

- Ensure ethical and secure outcomes

- Provide transparency and observability

Once trust is established, we can delegate more confidently. But trust requires verifiable patterns, not wishful thinking. This is not fundamentally different from how we built trust in compilers, IDEs, test frameworks, or CI/CD automation. It is simply the next step.

Closing Thoughts

AI is not replacing engineers. It is empowering and redefining engineering.

Our responsibility now is to ensure that we evolve our craft:

- from coding to prompting,

- from manual review to automated validation,

- from human‑driven practice to AI‑augmented discipline.

AI accelerates us, provided we build the guardrails that keep us on track. Let us not chase speed alone, but pursue quality at speed, anchored in engineering excellence.

Enjoy your favourite brew. I will savour my hot chocolate and raise it to disciplined engineering, sound judgement, and value‑driven progress.